In a stark warning reverberating through the corridors of power, the White House has raised concerns over the proliferation of explicit AI-generated images depicting pop star Taylor Swift. The emergence of these manipulated visuals, created with artificial intelligence algorithms, has rekindled debates about the ethical implications of AI technology and the urgent need for regulatory measures to address its misuse. As the digital landscape becomes increasingly fraught with such threats, there is a compelling case for Congress to step in and enact comprehensive legislation to safeguard individuals’ privacy and combat the spread of harmful AI-generated content.

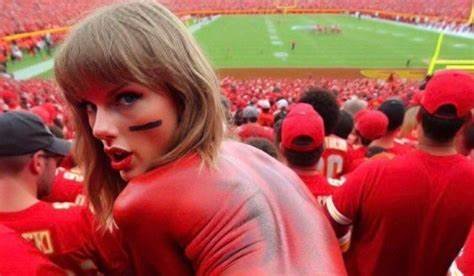

The phenomenon of AI-generated “deepfake” images, capable of seamlessly superimposing individuals’ faces onto explicit or misleading content, poses a grave threat to personal privacy, reputation, and online safety. Taylor Swift, a global icon with a massive fan following, has found herself at the center of this disturbing trend, as face-swapped images depicting her in compromising scenarios circulate across social media platforms and online forums. These manipulated visuals, often difficult to distinguish from authentic photographs, have the potential to inflict lasting harm on Swift’s image and undermine public trust in the authenticity of digital content.

The White House’s intervention underscores both the severity of the situation and the urgent need for concerted action against the proliferation of AI-generated deepfakes. Press Secretary Karine Jean-Pierre, in a statement addressing the issue, said the administration was alarmed by the reports and emphasized its commitment to combating malicious uses of AI technology and protecting individuals’ privacy rights. The call to action extends beyond mere rhetoric, signaling a determination to confront this emerging threat head-on through a combination of legislative, technological, and educational measures.

At the heart of the debate lies the question of accountability and legal recourse in the face of AI-generated content that blurs the boundary between reality and fabrication. Existing laws and regulations on defamation, privacy rights, and intellectual property struggle to keep pace with rapid advances in AI technology, leaving individuals vulnerable to exploitation and manipulation. Congress must seize the opportunity to enact robust legislation that explicitly addresses the creation and distribution of deepfake content, empowering law enforcement agencies and judicial authorities to prosecute offenders and deter future abuses.

Moreover, the issue transcends the realm of individual privacy and reputation, raising broader concerns about the societal impact of AI-generated misinformation and its potential to sow discord, undermine trust, and manipulate public discourse. The proliferation of deepfake technology threatens to erode the very fabric of democracy by fostering a climate of distrust and uncertainty, where truth becomes increasingly elusive amid a sea of digitally manipulated falsehoods. In an era marked by rampant disinformation and online manipulation campaigns, safeguarding the integrity of digital content is paramount to preserving the foundations of a healthy and informed society.

Congressional action is essential to address this multifaceted challenge comprehensively, drawing upon interdisciplinary expertise and stakeholder input to formulate effective policy responses. Legislation should prioritize the protection of individuals’ privacy rights and reputational integrity, while also fostering innovation and responsible AI development. Key provisions may include mandatory disclosure requirements for AI-generated content, enhanced penalties for malicious deepfake creation and distribution, and the establishment of dedicated task forces to monitor emerging threats and coordinate enforcement efforts.

Furthermore, education and public awareness initiatives are integral to helping individuals discern fact from fiction in an increasingly digitized world. By promoting media literacy, critical thinking skills, and digital hygiene practices, policymakers can equip citizens to navigate the digital landscape with greater discernment and resilience against manipulation tactics. Collaboration between government, industry, academia, and civil society is essential to foster a culture of responsible AI usage and uphold ethical standards in the development and deployment of AI technologies.

Ultimately, the White House’s warning about AI-generated images of Taylor Swift serves as a wake-up call: legislative action to address the proliferation of deepfake content is urgently needed. Congress has a pivotal role to play in crafting comprehensive solutions that safeguard individuals’ privacy rights, preserve the integrity of digital content, and uphold the principles of truth and transparency in the digital age. By taking proactive measures to regulate AI technology responsibly, lawmakers can mitigate the risks posed by malicious deepfakes and foster a safer, more trustworthy online environment for all.